List the name space (two trees mounted at /zx/abin and /zx/xbin, nothing else mounted):

```
> ns
/ 0
/zx/abin 1
"" path:"/zx/abin" mode:"020000000755" type:"d" size:"3" name:"/" mtime:"1396356469000000000" addr:"tcp!Atlantis.local!8002!abin" proto:"zx finder" gc:"y"
/zx/xbin 1
"" path:"/zx/xbin" mode:"020000000755" type:"d" size:"1" name:"/" mtime:"1396356453000000000" addr:"tcp!Atlantis.local!8002!xbin" proto:"zx finder" gc:"y"
```
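Each mounted tree is described by a directory entry printed as a list of `name:"value"` attributes, and the Go code further down indexes entries the same way (`d["path"]`, `d["mode"]`). Here is a minimal sketch that parses one such line into a map. It assumes values are always double-quoted with Go-compatible escapes. `Dir` and `parseAttrs` are hypothetical names, not the zx API. Unnamed tokens such as the leading `""` are skipped because their meaning isn't stated here.

```go
package main

import (
	"fmt"
	"strconv"
	"strings"
)

// Dir is a hypothetical stand-in for a directory entry: attribute name -> value.
type Dir map[string]string

// parseAttrs parses a line of name:"value" pairs, as printed by ns, into a Dir.
// Quoted tokens without a name (such as the leading "") are skipped.
func parseAttrs(line string) (Dir, error) {
	d := Dir{}
	s := strings.TrimSpace(line)
	for s != "" {
		name := ""
		if s[0] != '"' {
			i := strings.IndexByte(s, ':')
			if i < 0 {
				return nil, fmt.Errorf("missing ':' in %q", s)
			}
			name, s = s[:i], s[i+1:]
		}
		// Take the quoted value at the front of s and unquote it.
		q, err := strconv.QuotedPrefix(s)
		if err != nil {
			return nil, err
		}
		val, err := strconv.Unquote(q)
		if err != nil {
			return nil, err
		}
		if name != "" {
			d[name] = val
		}
		s = strings.TrimSpace(s[len(q):])
	}
	return d, nil
}

func main() {
	line := `"" path:"/zx/abin" mode:"020000000755" type:"d" size:"3" name:"/" mtime:"1396356469000000000" addr:"tcp!Atlantis.local!8002!abin" proto:"zx finder" gc:"y"`
	d, err := parseAttrs(line)
	if err != nil {
		fmt.Println(err)
		return
	}
	fmt.Println(d["path"], d["addr"], d["proto"])
}
```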

List just the files at /zx:

```
> l -l /zx
d-rwxrwx--- /zx/abin 3 01 Apr 14 14:47 CEST
d-rwxrwx--- /zx/xbin 1 01 Apr 14 14:47 CEST
```
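The `mode` attribute printed by `ns`, `020000000755`, is consistent with Go's `os.FileMode` encoding. In that encoding the directory bit `os.ModeDir` is `1<<31` (`020000000000` in octal) and the low bits hold the usual permissions. The sketch below assumes that encoding and decodes the value. Go formats the result as `drwxr-xr-x`, which is not the layout `l -l` uses. `decodeMode` is a hypothetical helper.

```go
package main

import (
	"fmt"
	"os"
	"strconv"
)

// decodeMode parses an octal mode string such as "020000000755" into an
// os.FileMode. Reading bit 31 as os.ModeDir is an assumption based on the
// printed value matching Go's own encoding.
func decodeMode(s string) (os.FileMode, error) {
	v, err := strconv.ParseUint(s, 8, 32)
	if err != nil {
		return 0, err
	}
	return os.FileMode(v), nil
}

func main() {
	m, err := decodeMode("020000000755")
	if err != nil {
		fmt.Println(err)
		return
	}
	fmt.Println(m.IsDir(), m.Perm(), m) // true -rwxr-xr-x drwxr-xr-x
}
```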

List all files under / with mode 0750:

```
> l -l /,mode=0750
- 0-rwxr-x--- /zx/xbin/bin/Go 202 01 Apr 14 14:47 CEST
- 0-rwxr-x--- /zx/xbin/bin/Watch 2351948 01 Apr 14 14:47 CEST
- 0-rwxr-x--- /zx/xbin/bin/a 212 01 Apr 14 14:47 CEST
- 0-rwxr-x--- /zx/xbin/bin/cgo 2452606 01 Apr 14 14:47 CEST
- 0-rwxr-x--- /zx/xbin/bin/drawterm 837032 01 Apr 14 14:47 CEST
- 0-rwxr-x--- /zx/xbin/bin/drawterm.old 843880 01 Apr 14 14:47 CEST
- 0-rwxr-x--- /zx/xbin/bin/dt 60 01 Apr 14 14:47 CEST
- 0-rwxr-x--- /zx/xbin/bin/ebnflint 1149721 01 Apr 14 14:47 CEST
- 0-rwxr-x--- /zx/xbin/bin/gacc 32 01 Apr 14 14:47 CEST
- 0-rwxr-x--- /zx/xbin/bin/gnot 52 01 Apr 14 14:47 CEST
- 0-rwxr-x--- /zx/xbin/bin/hgpatch 1328046 01 Apr 14 14:47 CEST
- 0-rwxr-x--- /zx/xbin/bin/mango-doc 2957328 01 Apr 14 14:47 CEST
- 0-rwxr-x--- /zx/xbin/bin/nix 60 01 Apr 14 14:47 CEST
- 0-rwxr-x--- /zx/xbin/bin/quietgcc 1326 01 Apr 14 14:47 CEST
- 0-rwxr-x--- /zx/xbin/bin/t+ 23 01 Apr 14 14:47 CEST
- 0-rwxr-x--- /zx/xbin/bin/t- 22 01 Apr 14 14:47 CEST
```
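The argument `/,mode=0750` appears to combine a path and a predicate. The sketch below shows that split, followed by a simple `name=value` match over directory entries. It assumes the comma always separates the path from the predicate, and it compares modes numerically on the permission bits only. The real predicate language may well be richer. `splitArg`, `matches`, and the sample entries in `main` are made up for illustration.

```go
package main

import (
	"fmt"
	"strconv"
	"strings"
)

// Dir is a hypothetical stand-in for a directory entry.
type Dir map[string]string

// splitArg splits an argument such as "/,mode=0750" into a path and a predicate.
func splitArg(arg string) (path, pred string) {
	path, pred, _ = strings.Cut(arg, ",")
	return path, pred
}

// matches reports whether d lies under path and satisfies a "name=value"
// predicate. Modes are compared numerically, on the permission bits only.
func matches(d Dir, path, pred string) bool {
	p := d["path"]
	if path != "/" && p != path && !strings.HasPrefix(p, path+"/") {
		return false
	}
	if pred == "" {
		return true
	}
	name, want, ok := strings.Cut(pred, "=")
	if !ok {
		return false
	}
	if name != "mode" {
		return d[name] == want
	}
	got, err1 := strconv.ParseUint(d["mode"], 8, 32)
	w, err2 := strconv.ParseUint(want, 8, 32)
	return err1 == nil && err2 == nil && got&0777 == w&0777
}

func main() {
	// Sample entries (made up, using paths from the listings above).
	ents := []Dir{
		{"path": "/zx/abin", "mode": "020000000755"},
		{"path": "/zx/xbin/bin/Go", "mode": "0750"},
		{"path": "/zx/xbin/bin/t+", "mode": "0750"},
	}
	path, pred := splitArg("/,mode=0750")
	for _, d := range ents {
		if matches(d, path, pred) {
			fmt.Println(d["path"])
		}
	}
}
```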

Then chmod all of them to mode 0755:

```
> l -a /,mode=0750 | chmod 0755
```

How did chmod find out which files to change? Here's how:

```
> zl -a /zx
tcp!Atlantis.local!8002!abin /
tcp!Atlantis.local!8002!xbin /
```
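Each `zl -a` line pairs a Plan 9 style dial address (`net!host!port`, followed here by what looks like the name of the exported tree) with a path inside that tree. The sketch below parses such lines, the way a command reading entries from a pipe might. Two parts are guesses: that the fourth `!`-separated field is the tree name, and that `chmod` receives lines in exactly this format. `Entry` and `parseEntry` are hypothetical names.

```go
package main

import (
	"bufio"
	"fmt"
	"strings"
)

// Entry is one "address path" line. Reading the last "!" field of the
// address as the tree name is an assumption.
type Entry struct {
	Net, Host, Port, Tree string
	Path                  string
}

// parseEntry splits a line such as "tcp!Atlantis.local!8002!abin /".
func parseEntry(line string) (Entry, error) {
	addr, path, ok := strings.Cut(strings.TrimSpace(line), " ")
	if !ok {
		return Entry{}, fmt.Errorf("no path in %q", line)
	}
	f := strings.Split(addr, "!")
	if len(f) != 4 {
		return Entry{}, fmt.Errorf("bad address %q", addr)
	}
	return Entry{Net: f[0], Host: f[1], Port: f[2], Tree: f[3], Path: strings.TrimSpace(path)}, nil
}

func main() {
	out := "tcp!Atlantis.local!8002!abin /\ntcp!Atlantis.local!8002!xbin /\n"
	sc := bufio.NewScanner(strings.NewReader(out))
	for sc.Scan() {
		e, err := parseEntry(sc.Text())
		if err != nil {
			fmt.Println(err)
			continue
		}
		fmt.Printf("dial %s!%s!%s, tree %s, path %s\n", e.Net, e.Host, e.Port, e.Tree, e.Path)
	}
}
```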

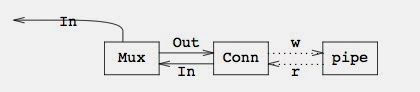

The interesting thing is that names are kept separate from files: a file server exports a finder interface, used to find out about directory entries, and a file tree, used to perform operations on those entries.
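The split between names and files can be sketched as two Go interfaces. The first streams the entries matching some condition, each tagged with the address of the server holding it. The second performs operations on those entries. `Finder`, `Tree`, and `memServer` are hypothetical names for illustration, since the real zx interfaces are not shown in this post. The toy server below implements both over an in-memory map.

```go
package main

import (
	"fmt"
	"sync"
)

// Dir is a hypothetical stand-in for a directory entry.
type Dir map[string]string

// Finder discovers entries; Tree operates on them (hypothetical interfaces).
type Finder interface {
	Find(match func(Dir) bool) <-chan Dir
}

type Tree interface {
	Wstat(path string, nd Dir) <-chan error
}

// memServer is a toy server exporting both interfaces over one set of entries.
type memServer struct {
	mu   sync.Mutex
	addr string
	ents map[string]Dir // keyed by path
}

// Find streams copies of the matching entries, each tagged with the server address.
func (s *memServer) Find(match func(Dir) bool) <-chan Dir {
	s.mu.Lock()
	var found []Dir
	for _, d := range s.ents {
		if match(d) {
			c := Dir{"addr": s.addr}
			for k, v := range d {
				c[k] = v
			}
			found = append(found, c)
		}
	}
	s.mu.Unlock()
	dc := make(chan Dir)
	go func() {
		defer close(dc)
		for _, d := range found {
			dc <- d
		}
	}()
	return dc
}

// Wstat updates the entry at path; the channel yields an error or is closed empty.
func (s *memServer) Wstat(path string, nd Dir) <-chan error {
	ec := make(chan error, 1)
	s.mu.Lock()
	d, ok := s.ents[path]
	if ok {
		for k, v := range nd {
			d[k] = v
		}
	}
	s.mu.Unlock()
	if !ok {
		ec <- fmt.Errorf("%s: not found", path)
	}
	close(ec)
	return ec
}

func main() {
	srv := &memServer{addr: "tcp!Atlantis.local!8002!xbin", ents: map[string]Dir{
		"/bin/Go":  {"path": "/bin/Go", "mode": "0750"},
		"/bin/nix": {"path": "/bin/nix", "mode": "0750"},
	}}
	var f Finder = srv
	var t Tree = srv
	for d := range f.Find(func(d Dir) bool { return d["mode"] == "0750" }) {
		// A real command would use d["addr"] to pick the server to talk to.
		if err := <-t.Wstat(d["path"], Dir{"mode": "0755"}); err != nil {
			fmt.Println(err)
		}
	}
	fmt.Println(srv.ents["/bin/Go"]["mode"], srv.ents["/bin/nix"]["mode"]) // 0755 0755
}
```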

Commands use finders to discover entries, and then use those entries to reach the servers and operate on them (each entry carries its server's address, like the addr attribute shown by ns). Connections to servers are cached, of course; creating a new one per directory entry would be silly.

All these requests are streamed through channels, as are the replies, so you can write code like this:

```go
dc := sh.files(true, args...)
n := 0
for d := range dc {
	n++
	// We could process +rwx/-rwx here: use d["mode"] to build
	// the new mode for this entry and then use just that one.
	nd := zx.Dir{"mode": mode}
	ec := rfs.Dir(d).Wstat(nd)
	<-ec // wait for the reply
	err := cerror(ec)
	if err != nil {
		fmt.Printf("%s: %s\n", d["path"], err)
	}
}
```
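`cerror` is not part of standard Go. In stock Go, one way to get a similar request/reply shape is to have each request return a channel with room for one error, closed once the reply arrives. Receiving from it then waits for the reply and yields nil on success. The `wstat` function below is a hypothetical stand-in that updates a local map in a goroutine instead of talking to a server, and the entries are made up.

```go
package main

import "fmt"

// Dir is a hypothetical stand-in for a directory entry.
type Dir map[string]string

// wstat is a hypothetical request that updates d in another goroutine.
// Its reply channel holds at most one error and is closed when the request
// is done, so a receive waits for the reply and yields nil on success.
func wstat(d, nd Dir) chan error {
	ec := make(chan error, 1)
	go func() {
		defer close(ec)
		if d["path"] == "" {
			ec <- fmt.Errorf("no path")
			return
		}
		for k, v := range nd {
			d[k] = v
		}
	}()
	return ec
}

func main() {
	// Entries arrive through a channel, as they do from sh.files above.
	dc := make(chan Dir)
	go func() {
		defer close(dc)
		for _, p := range []string{"/zx/xbin/bin/Go", "", "/zx/xbin/bin/nix"} {
			dc <- Dir{"path": p, "mode": "0750"}
		}
	}()
	n := 0
	for d := range dc {
		n++
		if err := <-wstat(d, Dir{"mode": "0755"}); err != nil {
			fmt.Printf("%q: %s\n", d["path"], err)
		}
	}
	fmt.Println(n, "entries")
}
```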

The chmod loop waits for one reply at a time, but it could just as easily keep 50 requests in flight, as another command (one that removes entries) does:

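```go
// Keep up to 50 Remove requests in flight.
calls := make(chan chan error, 50)
for i := len(ents) - 1; i >= 0; i-- {
	ec := rfs.Dir(ents[i]).Remove()
	select {
	case calls <- ec:
		// there was room for one more request
	case one := <-calls:
		// wait for an older reply, then queue this request in its place
		<-one
		if err := cerror(one); err != nil {
			fmt.Printf("%s\n", err)
		}
		calls <- ec
	}
}
// wait for the replies still in flight
close(calls)
for one := range calls {
	<-one
	if err := cerror(one); err != nil {
		fmt.Printf("%s\n", err)
	}
}
```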