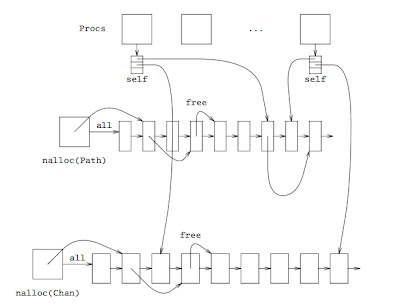

This TR from the Lsub papers page describes the Nix allocator and provides an initial evaluation of it. In short, in half of the cases resources could be allocated by the allocating process itself, without interlocking with other processes or cores and without disturbing any other system component. The TR describes other benefits as well.
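To illustrate the general idea of allocating without interlocking, here is a minimal sketch. It is not the Nix allocator (the TR describes the real design). It assumes each core keeps a private cache of free blocks, so the common path takes no lock, and only goes to a shared, lock-protected pool when its cache is empty or too full. The class name `PerCoreAllocator`, the `batch` parameter, and the integer block ids are hypothetical.

```python
import threading


class PerCoreAllocator:
    """Per-core caches of free blocks in front of a lock-protected global pool.

    Illustrative sketch only: `batch` and the integer block ids are hypothetical.
    Each core's cache is assumed to be touched only by code running on that core.
    """

    def __init__(self, ncores, nblocks, batch=4):
        self.lock = threading.Lock()               # protects self.pool only
        self.pool = list(range(nblocks))           # global free list (block ids)
        self.caches = [[] for _ in range(ncores)]  # private free list per core
        self.batch = batch                         # blocks moved per refill/flush
        self.fast = [0] * ncores                   # per core: served with no lock
        self.slow = [0] * ncores                   # per core: had to take the lock

    def alloc(self, core):
        cache = self.caches[core]
        if cache:                                  # fast path: purely local
            self.fast[core] += 1
            return cache.pop()
        self.slow[core] += 1
        with self.lock:                            # slow path: refill a batch
            n = min(self.batch, len(self.pool))
            for _ in range(n):
                cache.append(self.pool.pop())
        if not cache:
            raise MemoryError("no free blocks left")
        return cache.pop()

    def free(self, core, block):
        cache = self.caches[core]
        cache.append(block)                        # fast path: keep it local
        if len(cache) > 2 * self.batch:            # cache too big: give a batch back
            with self.lock:
                for _ in range(self.batch):
                    self.pool.append(cache.pop())
```

The `fast` and `slow` counters show how many allocations on each core avoided the shared lock; the ratio depends entirely on the workload and on `batch`.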

Here is a picture of the allocator as a teaser:

Quick notes regarding system software issues, references to related work, ideas for future work, and any interesting result along the way. See the lsub web site.

Showing posts with label multicore. Show all posts


Saturday, July 13, 2013

Wednesday, January 25, 2012

Plots for SMP, AMP, and benchmarks

Recently I posted here about SMP, AMP, and benchmarks. That post discussed the results of measuring an actual build of the kernel and a pseudo-build, in which a benchmark program created concurrent processes for all compilations at once. There are significant differences between the two, because the latter is closer to a microbenchmark and hides effects found in real-world loads.

These are the plots.


- Time to compile using mk (which spawns concurrent compilations). The old scheduler is SMP, the new one is AMP.

- Time to compile using pmk (with the source tree held in RAM), a fake build tool written just for this benchmark. It spawns all compilations at once and then waits for all of them.
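The pmk behavior described above (spawn everything, then wait for everything) can be sketched in a few lines. This is a hypothetical stand-in, not the actual pmk: the function name `pmk` and the argv-list interface are assumptions, and the demo "compilations" are just Python child processes that exit immediately.

```python
import subprocess
import sys


def pmk(commands):
    """Start every command at once, then wait for all of them.

    `commands` is a list of argv lists. Unlike mk, no dependencies are
    honored: every job is started before any job is awaited.
    """
    procs = [subprocess.Popen(argv) for argv in commands]  # spawn all
    return [p.wait() for p in procs]                        # then wait for all


if __name__ == "__main__":
    # Stand-in "compilations": each child process just exits.
    jobs = [[sys.executable, "-c", "pass"] for _ in range(8)]
    print(pmk(jobs))
```

Because nothing waits for anything else, the only limit on concurrency is the number of cores, which is exactly what makes this load friendlier to the scheduler than a real build.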

Draw your own conclusions. No wonder: a micro-benchmark can be designed so that AMP always achieves a speedup as we add more cores. It's a pity that in the real world there are always dependencies between independent things.
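A toy model shows why dependencies matter so much. The sketch below, assuming greedy list scheduling on identical cores and made-up job durations (a hypothetical `gen` script that must run before eight compilations, followed by a `link` step), computes the finishing time of a job graph with and without its dependency edges. It is an illustration, not a model of the Nix scheduler.

```python
import heapq


def makespan(durations, deps, ncores):
    """Greedy list scheduling of a job DAG on `ncores` identical cores.

    durations: {job: time}; deps: {job: [jobs that must finish first]}.
    Returns the finishing time of the last job. Assumes `deps` is acyclic.
    """
    remaining = {j: len(deps.get(j, [])) for j in durations}
    users = {j: [] for j in durations}          # reverse edges
    for j, before in deps.items():
        for b in before:
            users[b].append(j)
    ready = sorted(j for j, n in remaining.items() if n == 0)
    running = []                                # heap of (finish_time, job)
    now, free, done = 0.0, ncores, 0
    while done < len(durations):
        while ready and free > 0:               # start ready jobs on free cores
            j = ready.pop(0)
            heapq.heappush(running, (now + durations[j], j))
            free -= 1
        now, j = heapq.heappop(running)         # advance to the next completion
        free += 1
        done += 1
        for u in users[j]:                      # release jobs waiting on j
            remaining[u] -= 1
            if remaining[u] == 0:
                ready.append(u)
    return now


durs = {"gen": 2.0, **{f"c{i}": 1.0 for i in range(8)}, "link": 1.0}
deps = {**{f"c{i}": ["gen"] for i in range(8)}, "link": [f"c{i}" for i in range(8)]}
```

With 32 cores, `makespan(durs, deps, 32)` is 4.0 while `makespan(durs, {}, 32)` is 2.0: dropping the dependencies, as the pseudo-build does, doubles the apparent speedup even though the total work is identical.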

Is NUMA relevant?

We ran an experiment with a program that spawns as many compiler processes as needed to compile the kernel source files in parallel. It uses the new AMP scheduler, where core 0 assigns processes to other cores for execution.

To see the effect of NUMA on the 32-core machine used for testing, we timed this program for every number of cores in two ways: first, allocating memory from the domain local to the process; second, always allocating it from the last memory domain.
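The two allocation policies compared in the experiment can be sketched as a single choice of memory domain ("color") per allocation. The layout here is an assumption, since the post does not give it: 4 domains of 8 contiguous cores each. The names `color_of` and `pick_color` are hypothetical.

```python
NCORES = 32
NDOMAINS = 4                           # assumption: 4 memory domains (colors)
CORES_PER_DOMAIN = NCORES // NDOMAINS  # 8 cores per domain under that assumption


def color_of(core):
    """Memory domain local to `core`, assuming cores are numbered
    contiguously within each domain."""
    return core // CORES_PER_DOMAIN


def pick_color(core, policy):
    """Color to allocate from for a process running on `core`."""
    if policy == "local":              # NUMA-aware: the process's own domain
        return color_of(core)
    if policy == "last":               # the contrast case: always the last domain
        return NDOMAINS - 1
    raise ValueError(f"unknown policy {policy!r}")
```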

Thus, if NUMA effects are significant, they must show up in the plot. This is it:

For fewer than 5 cores there is a visible difference: using a NUMA-aware allocator results in lower times, as could be expected. However, for more cores there is no significant difference, which means that NUMA effects are irrelevant on this system and for this load (actually, the NUMA allocator is a little bit worse).

The probable reason is that, when using more cores, the system seems to trade off load balancing of processes among cores against memory locality. Although all memory is allocated from the right color (memory domain), the pages kept in the cache already have a color, and so does kernel memory. Using processors from other colors comes at the expense of using remote memory, which means that in the end it does not matter much where you allocate memory.
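A back-of-the-envelope model makes the argument concrete. Assume (hypothetically) that shared pages such as cached binaries and kernel memory live in color 0, that cores are put to use in order 0, 1, 2, ..., and that each color holds a fixed number of cores. The fraction of busy cores that must reach those shared pages remotely then grows with the core count, no matter where each process's private memory comes from. This is a model, not a measurement.

```python
def remote_fraction(ncores, cores_per_domain, shared_color=0):
    """Fraction of the first `ncores` cores whose local color differs from
    the color holding the shared pages (binaries, kernel memory).

    Assumes core c belongs to color c // cores_per_domain.
    """
    remote = sum(1 for c in range(ncores) if c // cores_per_domain != shared_color)
    return remote / ncores


for n in (4, 8, 16, 32):
    print(n, remote_fraction(n, 8))   # 0.0, 0.0, 0.5, 0.75 with 8 cores per color
```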

If each process relied on a different binary, results might be different. However, in practice, the same few binaries are used most of the time (the shell, common file tools, compilers, etc.).

Thus, is NUMA still relevant for this kind of machine and workload?

As provocative as this question might be...

Tuesday, January 24, 2012

SMP, AMP, and benchmarks

The new AMP scheduler for NIX can keep up with loads resulting from actual compilation of the system software. Up to 16 cores, adding cores yields a real speedup. From there on, contention on other resources keeps times from improving, and they even get a bit worse.

With the old SMP scheduler, when more than about 6 cores are used, times get worse fast. The curve is almost exponential, because of a high contention on the scheduler.

That was using a system load from the real world. As an experiment, we also measured what happens with a program similar to mk. This program concurrently spawns as many processes as needed to compile all the source files. But it is not a real build: no dependencies are taken into account, no code is generated by running scripts, etc.

For this test, which is halfway between a real program and a micro-benchmark, the results differ. Instead of getting much worse, SMP keeps its time when more than 4 cores are added, and AMP achieves a speedup of about 30% to 40%.

Thus, you might infer from this benchmark that SMP is OK for building software with 32 cores and that AMP may indeed speed it up. However, for the actual build process SMP is not OK, but much slower. And AMP achieves no speedup by the time you reach 32 cores; you had better run with 16 cores or fewer.

There are lies, damn lies, and microbenchmarks.

Saturday, January 21, 2012

AMP better than SMP for 32 cores

After some performance measurement, we have found that AMP performs better than SMP at least for some real-world workloads, like making a kernel.

It seems that, once enough cores are present, interference in the scheduler is enough to slow down the system. Sparing a core just to schedule tasks on other cores (and to handle interrupts) makes the system as a whole faster.
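The difference between the two designs can be sketched with threads standing in for cores. In the SMP sketch every core takes work from one shared run queue under one lock; in the AMP sketch only core 0 touches the list of work and hands each task to a per-core mailbox, so worker cores never contend for a scheduler lock. The round-robin assignment and the names `smp_run`, `amp_run`, and `mbox` are simplifying assumptions, not the NIX implementation.

```python
import queue
import threading


def smp_run(tasks, ncores):
    """SMP: every core takes work from one shared run queue (one lock)."""
    runq = list(tasks)
    lock = threading.Lock()
    done = []                              # (core, task) pairs, appended under the lock

    def core(cid):
        while True:
            with lock:                     # all cores contend for this lock
                if not runq:
                    return
                t = runq.pop(0)
                done.append((cid, t))
            # ... the task would run here, outside the lock ...

    threads = [threading.Thread(target=core, args=(i,)) for i in range(ncores)]
    for th in threads:
        th.start()
    for th in threads:
        th.join()
    return done


def amp_run(tasks, ncores):
    """AMP: core 0 only schedules; cores 1..ncores-1 only run what they get."""
    mbox = [queue.Queue() for _ in range(ncores)]  # one mailbox per core
    done = []
    done_lock = threading.Lock()           # only for collecting results

    def worker(cid):
        while True:
            t = mbox[cid].get()            # waits on its own mailbox only
            if t is None:                  # dispatcher says: no more work
                return
            # ... the task would run here ...
            with done_lock:
                done.append((cid, t))

    workers = [threading.Thread(target=worker, args=(i,)) for i in range(1, ncores)]
    for th in workers:
        th.start()
    for i, t in enumerate(tasks):          # core 0: round-robin assignment
        mbox[1 + i % (ncores - 1)].put(t)
    for i in range(1, ncores):
        mbox[i].put(None)
    for th in workers:
        th.join()
    return done
```

`amp_run` needs at least two cores, since core 0 never runs tasks itself; that is the core "spared" for scheduling.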

These are the times for three experiments: making a kernel, copying a file tree from RAM to RAM, and making a kernel with its source tree in RAM. The first two rows are taken from a previous experiment also reported in this blog. We re-ran that experiment for comparison with AMP, because its results depend on the state of the network, which may have changed.

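| Configuration | Make kernel | Copy tree (RAM to RAM) | Make kernel (source in RAM) |
|---|---|---|---|
| single sched., 32 cores | 14.0344 | 1.921 | 10.458 |
| single sched., 4 cores | 10.789 | 0.608 | 5.073 |
| rerun of single sched., 32 cores | 12.8 | 2.20 | 9.23 |
| AMP sched., 32 cores | 10.17 | 0.995 | 5.775 |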
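The speedup of AMP over the rerun of the single scheduler on 32 cores can be computed directly from the times listed above. The short helper below is only arithmetic on those numbers; the name `speedups` is hypothetical, and the post does not state the time unit, so only ratios are meaningful.

```python
def speedups(before, after):
    """Ratio before/after for each column: greater than 1 means `after` is faster."""
    return [round(b / a, 2) for b, a in zip(before, after)]


# Columns: make kernel, copy tree RAM to RAM, make kernel with source in RAM.
SMP32 = (12.8, 2.20, 9.23)     # rerun of single scheduler, 32 cores
AMP32 = (10.17, 0.995, 5.775)  # AMP scheduler, 32 cores

print(speedups(SMP32, AMP32))
```

For the kernel build this gives a ratio of about 1.26, and about 1.6 when the source tree is in RAM.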

Monday, May 2, 2011

Systems for multicore computers

With so many cores in modern machines, it is time to rethink how the system should handle them.

There are interesting models, like the multikernel, published in the literature. However, it seems that a simpler way must exist.

For example, we can make some cores execute just user code, with no interrupts, and no round robin. We can make other cores execute system code.
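A minimal sketch of such a split, assuming a fixed partition where the first few cores run system code and take all interrupts while the rest run only user code. The `Role` enum and the functions `assign_roles` and `irq_targets` are hypothetical names for illustration.

```python
from enum import Enum


class Role(Enum):
    USER = "user"      # runs only user code: no interrupts, no round robin
    SYSTEM = "system"  # runs system code: scheduling, interrupts, kernel services


def assign_roles(ncores, nsystem):
    """Hypothetical fixed split: the first `nsystem` cores run system code,
    the rest run only user code."""
    if not 0 < nsystem < ncores:
        raise ValueError("need at least one core of each kind")
    return {c: (Role.SYSTEM if c < nsystem else Role.USER) for c in range(ncores)}


def irq_targets(roles):
    """Cores allowed to take device interrupts: system cores only."""
    return [c for c, r in roles.items() if r is Role.SYSTEM]
```

For example, `assign_roles(32, 1)` leaves core 0 as the only system core, which matches the AMP setup measured above where one core schedules and handles interrupts.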
